Find a PhD opportunity

-

PhD Opportunity

UK Sickness Absence Crisis: Understanding lived experience of younger adults with long-term ill-health to inform policy & management practice

Explore our PhD opportunity at Nottingham Business School: Tackling the UK Sickness Absence Crisis. Apply now!

ntu.ac.uk/study-and-courses/postgraduate/phd/phd-opportunities/find-a-phd-opportunity/projects/business/uk-sickness-absence-crisis-understanding-lived-experience-of-younger-adults-with-long-term-ill-health-to-inform-policy-and-management-practice

-

Find out about our PhD opportunity in the Nottingham Business School on the Long-Term Socio-Economic Impacts of Fintech.

ntu.ac.uk/study-and-courses/postgraduate/phd/phd-opportunities/find-a-phd-opportunity/projects/business/assessing-the-long-term-socio-economic-impacts-of-fintech-a-longitudinal-study-on-poverty-reduction,-economic-empowerment,-and-financial-inclusion

-

Find out about our PhD opportunity in the Nottingham Business School on evaluating a new generation of financial crisis prediction models.

ntu.ac.uk/study-and-courses/postgraduate/phd/phd-opportunities/find-a-phd-opportunity/projects/business/predicting-the-unpredictable-evaluating-the-effectiveness-of-a-new-generation-of-financial-crisis-prediction-models-against-the-issue-of-new-technologies

-

PhD Opportunity

Rethinking models and policies for first and last mile travel – connecting rural areas beyond city boundaries in Leicestershire

Find out more about this PhD opportunity on first and last mile travel for rural areas in Leicestershire.

ntu.ac.uk/study-and-courses/postgraduate/phd/phd-opportunities/find-a-phd-opportunity/projects/architecture-design-built-environment/rethinking-models-and-policies-for-first-and-last-mile-travel-connecting-rural-areas-beyond-city-boundaries-in-leicestershire

-

PhD Opportunity

Energy for All: Broadening the benefits of community energy projects for local communities in Leicester, Leicestershire and Rutland

Discover our PhD studentship project in the Collaboratory Research Hub scheme. Supporting communities through partnership, research and collaboration.

ntu.ac.uk/study-and-courses/postgraduate/phd/phd-opportunities/find-a-phd-opportunity/projects/architecture-design-built-environment/energy-for-all-broadening-the-benefits-of-community-energy-projects-for-local-communities-in-leicester,-leicestershire-and-rutland

-

Find out about our PhD opportunity in the Nottingham Business School on leveraging AI-driven predictive analytics for talent management.

ntu.ac.uk/study-and-courses/postgraduate/phd/phd-opportunities/find-a-phd-opportunity/projects/business/leveraging-ai-driven-predictive-analytics-for-talent-management-a-framework-for-assessing-skill-capabilities,-identifying-skills-gaps-in-dynamic-work-environments

-

Find out about our PhD opportunity in the Nottingham Business School on the impact of experiential learning on graduate outcomes.

ntu.ac.uk/study-and-courses/postgraduate/phd/phd-opportunities/find-a-phd-opportunity/projects/business/what-form-and-duration-of-experiential-learning-optimises-graduate-outcomes-an-impact-study-of-experiential-learning-opportunities-within-and-beyond-the-he-curriculum

-

Discover our PhD studentship project in the Collaboratory Research Hub scheme. Supporting communities through partnership, research and collaboration.

ntu.ac.uk/study-and-courses/postgraduate/phd/phd-opportunities/find-a-phd-opportunity/projects/social-sciences/co-creating-transformative-and-innovative-strategies-to-improve-social-support,-educational-outcomes,-and-future-opportunities-for-children-and-young-people-in-leicestershire

-

Discover our PhD studentship project in the Collaboratory Research Hub scheme. Supporting communities through partnership, research and collaboration.

ntu.ac.uk/study-and-courses/postgraduate/phd/phd-opportunities/find-a-phd-opportunity/projects/social-sciences/invisible-poverty-and-the-politics-of-survival-examining-the-role-of-social-enterprises-and-community-centred-organisations-in-addressing-poverty-in-leicestershire-and-rutland-communities

-

PhD Opportunity

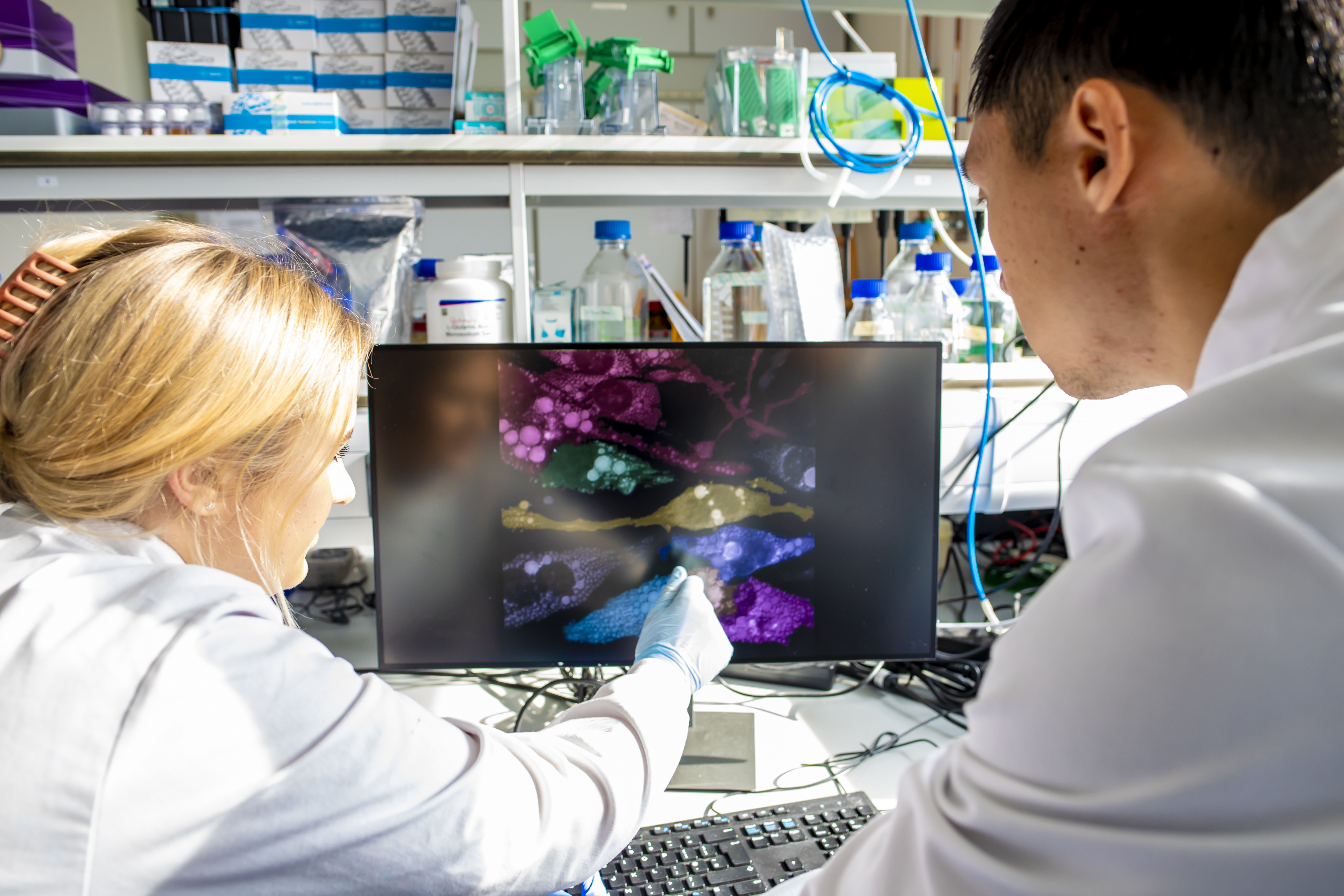

BBSRC Studentships

Nottingham Trent University is part of the Midlands Graduate School, an accredited Economic and Social Research Council (ESRC) Doctoral Training Partnership (DTP). See how the ESRC Doctoral Training Partnership can help support you in your research.

ntu.ac.uk/study-and-courses/postgraduate/phd/phd-opportunities/find-a-phd-opportunity/projects/science-technology/bbsrc-studentships